Working alongside AI agents, part 2

This is a follow-up to my previous piece about declarative interfaces and how they adapt to a user's needs. Using the example of an ad-buying workflow, we explored how user declarations can evolve into lightweight, ephemeral apps within apps—spinning up a customized UI in seconds and disappearing when the job is done.

Here, I dive deeper into the ramifications of those new capabilities by imagining them applied across an entire organization. The goal shifts from a single user prompting a single agent to a unified digital workforce operating autonomously in the background.

Transparency and attention

In my previous example, visibility was solved with a readable audit log. The user could inspect the AI's logic step-by-step and understand why it did what it did.

Today, that seems quaint – model output now exceeds the limits of human attention and memory. As agents handle hundreds of operations, the design challenge fundamentally shifts to state management. How do we abstract the activity while keeping the user informed of the overall health of an invisible system?

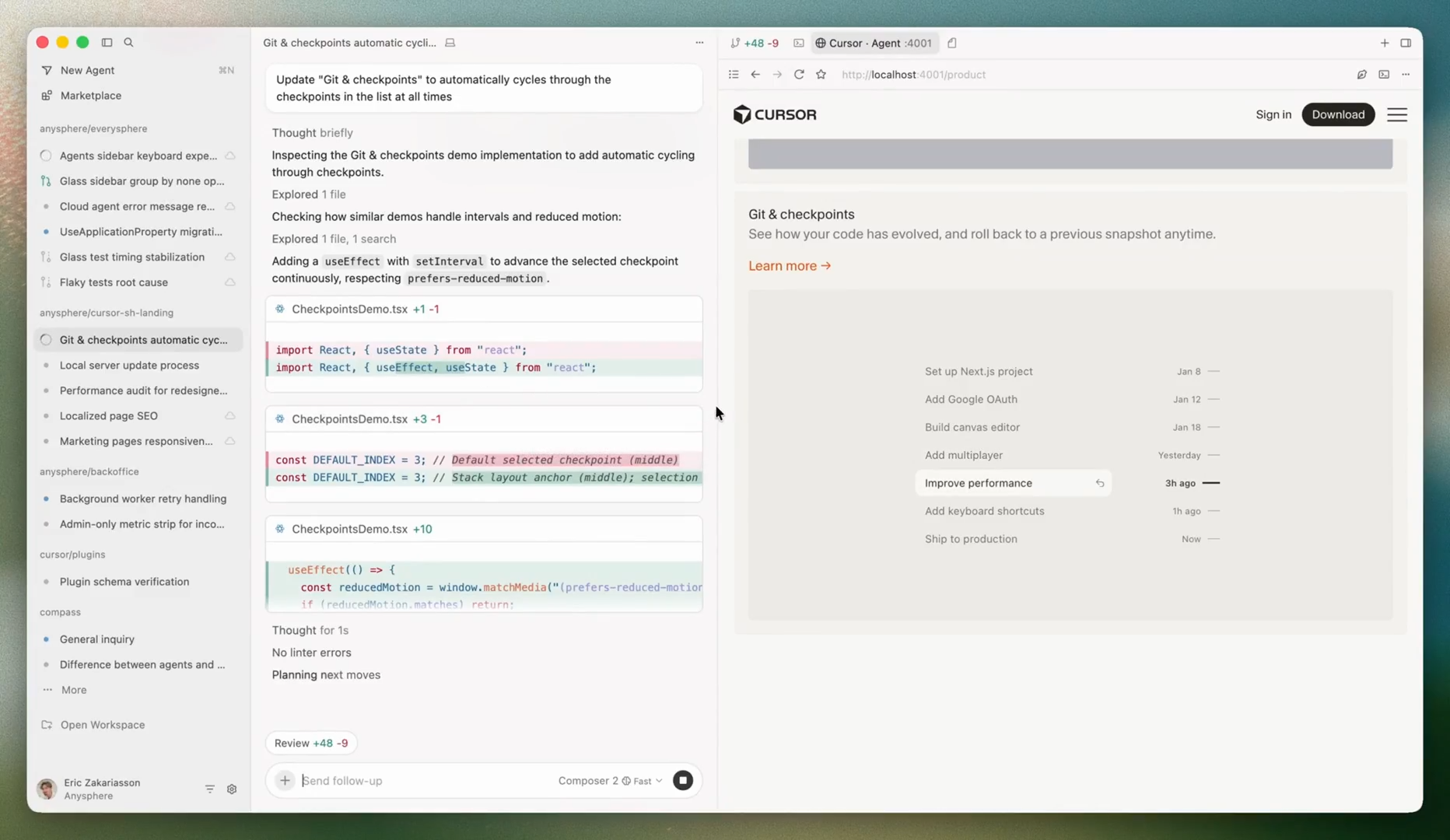

Code icebergs in a Cursor window

AI coding tools have partly solved this problem. They stream the model's output as it writes code, but you rarely read that code in full anymore. It flies past in the chat view. You can dive in if you want, but by default the tool shows only the last few lines: a code iceberg.

We have to move from a live feed of every action to an overview that only demands attention when necessary. Users need to feel confident that the system is humming along without needing to micromanage it.

Instead of traditional static loading bars or lists, the dashboard uses subtle animations to indicate that background agents are actively processing across different portfolios. Clicking into a specific purchase journey expands it to show the current phase, intentionally hiding the raw data exchange unless the user explicitly drills down.
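To make the overview idea concrete, here is a minimal sketch of how many background operations might collapse into one health line per portfolio. Everything in it is an assumption for illustration: the `Status` values, the `AgentOperation` fields, and the sample portfolio and phase names are hypothetical, not taken from a real dashboard.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    RUNNING = "running"
    DONE = "done"
    NEEDS_ATTENTION = "needs_attention"


@dataclass(frozen=True)
class AgentOperation:
    portfolio: str  # hypothetical grouping, e.g. one ad portfolio
    phase: str      # hypothetical phase label shown on drill-down
    status: Status


def summarize(operations):
    """Collapse many operations into one health entry per portfolio."""
    by_portfolio = {}
    for op in operations:
        by_portfolio.setdefault(op.portfolio, []).append(op)

    summary = {}
    for portfolio, ops in by_portfolio.items():
        counts = Counter(op.status for op in ops)
        flagged = [op.phase for op in ops if op.status is Status.NEEDS_ATTENTION]
        summary[portfolio] = {
            "health": "attention" if flagged else "ok",
            "running": counts[Status.RUNNING],
            "done": counts[Status.DONE],
            # The only per-operation detail surfaced by default.
            "flagged_phases": flagged,
        }
    return summary


# Made-up sample data.
operations = [
    AgentOperation("retail", "bidding", Status.RUNNING),
    AgentOperation("retail", "creative review", Status.DONE),
    AgentOperation("travel", "budget check", Status.NEEDS_ATTENTION),
    AgentOperation("travel", "bidding", Status.RUNNING),
]
overview = summarize(operations)
```

The design choice worth noticing is what the summary leaves out: counts and a single health flag travel upward, while the raw operations stay behind until someone drills down.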

Effective oversight was once squarely the realm of managers and executives. But as people gain agentic employees of their own, we may draw lessons from other methods of management. The next generation of knowledge workers might look to the real-time feedback loops of military innovation or the handoffs of a French restaurant's kitchen.

Orchestration

Earlier, we looked at a tidy, linear handoff: a Product Manager agent passes requirements to a Designer agent, who passes specs to an Engineer agent. Lots of use cases aren’t that cleanly separated.

Legal drafting, for example, requires a complex, event-driven architecture. Let's say a litigation partner uploads a batch of trial records. This event triggers a Coordinator agent to parse the court's requirements and filing deadlines. At the same time, a Research agent identifies precedents while a Citation-management agent checks the work.

The design challenge is the connective tissue. How do we design the logic of how agents share context and hand off state? What happens when linear isn’t enough?

If the Research agent flags a problem, such as a precedent that no longer holds, the orchestration layer must ensure that a Writing agent adjusts the arguments and the Citation-management agent removes the obsolete case. The interface needs to map these complex, non-linear relationships in a way that a human can actually comprehend. (Also, this may be but one of a dozen such cases for which that human is now responsible.)

An interactive node-based visual. A structured payload (the trial records) enters the system and is passed between nodes. It demonstrates how autonomous systems maintain shared context and handle parallel processing.
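One way to picture the connective tissue is a small publish/subscribe hub with a shared context object. The sketch below is only that, a sketch: the `EventBus` class, the event names, the file names, and the "Case A" / "Case B" citations are all invented for illustration, and handlers run one after another rather than truly in parallel.

```python
from collections import defaultdict


class EventBus:
    """Minimal synchronous publish/subscribe hub (illustrative only)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def publish(self, event_type, payload):
        # Copy the list so handlers may subscribe others while we iterate.
        for handler in list(self._handlers[event_type]):
            handler(payload)


# Shared context that every agent reads from and writes to.
context = {"citations": ["Case A", "Case B"], "arguments_revised_for": [], "log": []}
bus = EventBus()


def coordinator(records):
    context["log"].append(f"coordinator parsed {len(records)} records")


def research(records):
    context["log"].append("research reviewed precedents")
    # Hypothetical finding: one cited case no longer holds.
    bus.publish("precedent_flagged", "Case B")


def writing(case):
    context["arguments_revised_for"].append(case)
    context["log"].append(f"writing revised arguments relying on {case}")


def citation_management(case):
    if case in context["citations"]:
        context["citations"].remove(case)
    context["log"].append(f"citations dropped {case}")


# The upload fans out to two agents; the flag fans out to two more.
bus.subscribe("records_uploaded", coordinator)
bus.subscribe("records_uploaded", research)
bus.subscribe("precedent_flagged", writing)
bus.subscribe("precedent_flagged", citation_management)

bus.publish("records_uploaded", ["transcript.pdf", "exhibit-1.pdf"])
```

No agent calls another directly. Each one reacts to events and updates the shared context, which is what lets a single flag from Research ripple out to both Writing and Citation-management.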

Trust

Before an agent executes an irreversible action, such as approving a production deployment, it must pause and ask for confirmation. This builds trust. We know this from software QA: exception handling is the single most critical design pattern.
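As a rough sketch of that pause, the gate below asks for confirmation only when an action cannot be undone. The `Action` type, the `execute` function, and the `approve` / `decline` stand-ins are hypothetical; a real system would route the confirmation through its own approval UI.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    irreversible: bool
    run: Callable[[], str]


def execute(action, confirm):
    """Run an action, asking `confirm` first if it cannot be undone."""
    if action.irreversible and not confirm(action):
        return f"{action.name}: held for human review"
    return action.run()


deploy = Action("deploy to production", irreversible=True, run=lambda: "deployed")
lint = Action("run linter", irreversible=False, run=lambda: "linted")


# Stand-ins for a real approval prompt in the UI.
def approve(action):
    return True


def decline(action):
    return False


results = [
    execute(lint, decline),    # reversible: runs without asking
    execute(deploy, decline),  # irreversible and declined: held
    execute(deploy, approve),  # irreversible and approved: runs
]
```

Reversible work never triggers the prompt, so the human is interrupted only where the stakes justify it.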

Exceptions, like a failed regression test or a security vulnerability, are inevitable. When a probabilistic system hits a snag, it needs to degrade gracefully instead of stopping or crashing.

The UI highlights the exact discrepancy, provides the agent's confidence score and its underlying reasoning, and gives the human manager declarative options.

The system must flag the anomaly and present the human with a clear, localized intervention point. Here, the human resumes the role of individual contributor. The system needs to expose the full iceberg, so to speak, and reveal the necessary controls we’ve worked so hard to reduce and automate away.
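Here is one possible shape for that intervention point, assuming a pipeline of independent steps. A failing step is turned into an `Intervention` record carrying the discrepancy, the agent's confidence score, its reasoning, and a short list of declarative options, while the remaining steps keep running. All names, the 0 to 1 confidence scale, the option labels, and the sample failure are assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Intervention:
    step: str
    discrepancy: str
    confidence: float  # agent's self-reported score, assumed 0.0 to 1.0
    reasoning: str
    options: tuple     # declarative choices offered to the human


class StepFailed(Exception):
    """Raised by a step that hit a snag it cannot resolve on its own."""

    def __init__(self, discrepancy, confidence, reasoning):
        super().__init__(discrepancy)
        self.discrepancy = discrepancy
        self.confidence = confidence
        self.reasoning = reasoning


def run_pipeline(steps):
    """Run every step; convert failures into interventions instead of crashing."""
    completed, interventions = [], []
    for name, step in steps:
        try:
            step()
            completed.append(name)
        except StepFailed as err:
            interventions.append(
                Intervention(
                    step=name,
                    discrepancy=err.discrepancy,
                    confidence=err.confidence,
                    reasoning=err.reasoning,
                    options=("retry", "skip", "take over manually"),
                )
            )
    return completed, interventions


def regression_tests():
    # Made-up failure for illustration.
    raise StepFailed(
        "checkout test fails on new build",
        0.62,
        "failure appears only after the latest dependency bump",
    )


steps = [
    ("build", lambda: None),
    ("regression tests", regression_tests),
    ("security scan", lambda: None),
]
completed, interventions = run_pipeline(steps)
```

The failure stays local: the human gets one record to act on, and the steps that did not fail are already done.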